Jean-François (Jeff) Van de Poël – Ongoing reflection project, status update June 2025

This work is licensed under CC BY-NC-SA 4.0

AI can be a supportive tool with considerable potential, but its use without a framework can also have adverse effects on cognition and pedagogy. Below, we address three key issues: the illusion of mastery, the role of AI as an educational tutor, and the impact on academic output (homework, assignments), where the line between legitimate assistance and problematic substitution must be drawn.

Illusion of mastery and the need for critical thinking

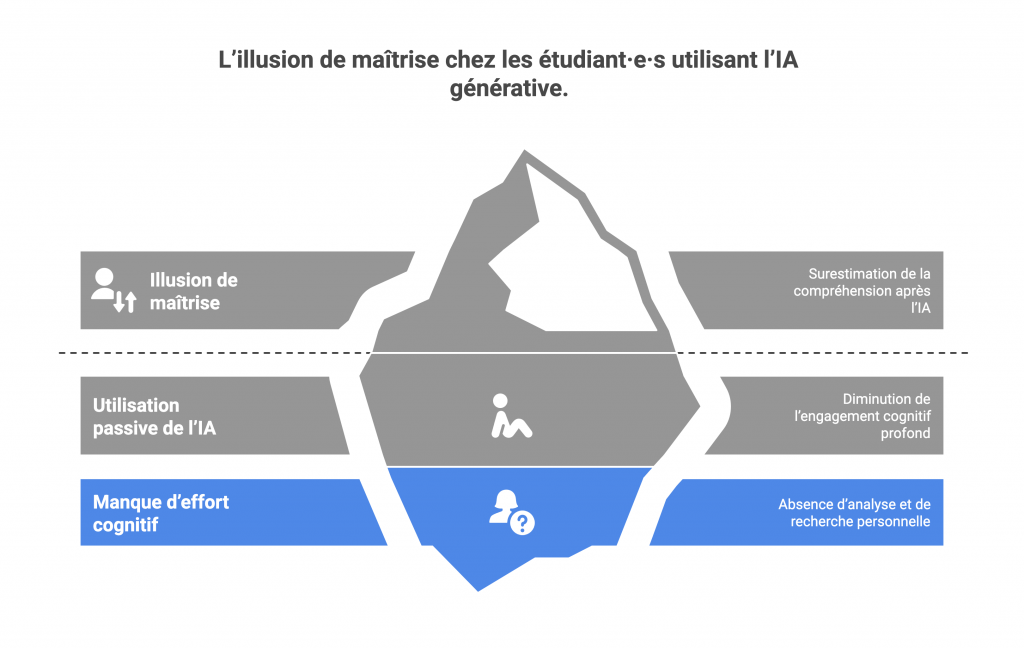

One of the first pitfalls observed is the risk of an illusion of mastery among students using generative AI. This phenomenon, sometimes called the “feeling of knowing” (Biggs, 1959), refers to the tendency to overestimate one’s own understanding after easily obtaining a well-formulated answer from AI.

Indeed, when faced with a fluent and coherent explanation provided by a chatbot, a student may feel that they know and have understood the concept, when in reality they have not invested the cognitive effort required to truly appropriate the notion. AI can thus reinforce a false sense of competence: the learner believes they have mastered the subject because they have an immediate answer, without having had to analyse or search for themselves.

In practical terms, this passive use of AI risks reducing engagement in deep cognitive processes that are essential for sustainable learning.

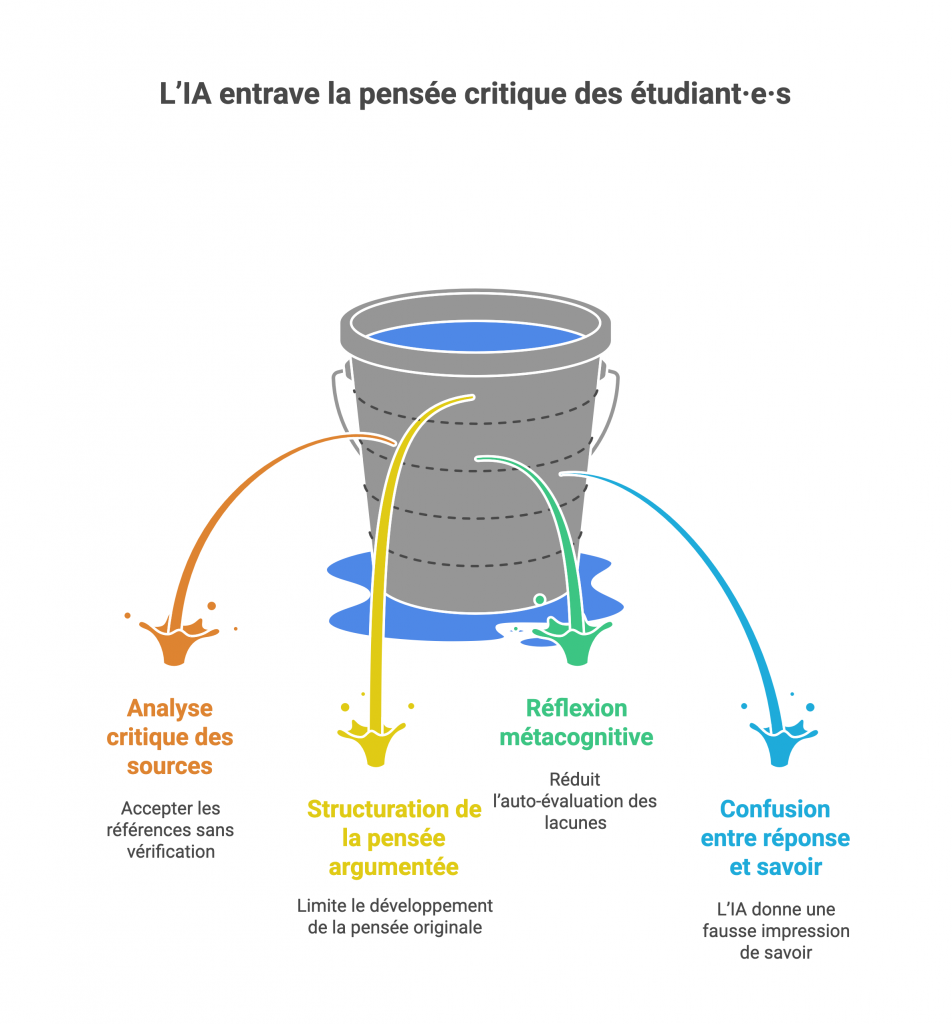

Among the processes that AI could bypass are:

- Critical analysis of sources. If AI provides an answer with references, the student may be tempted to accept them without verification. Yet it is well known that AI can generate false references or inaccurate citations mixed with real ones. Unless the learner verifies them systematically, erroneous or unfounded knowledge may take root. Critical thinking must prompt the questions: where does this information come from? Is it corroborated elsewhere?

- Structuring reasoned thought. By offering ready-made explanations or “turnkey” arguments, AI can limit the development of students’ critical and original thinking. If every question yields a neatly packaged essay, we deprive ourselves of the intellectual exercise of building our own reasoning, weighing the pros and cons, and finding our own examples. In the long run, this can reduce the ability to argue autonomously and innovatively.

- Metacognitive reflection on learning. Learning is reinforced when students take the time to reflect on their own understanding: what don’t I understand? How can I improve? If AI immediately provides an answer, the learner is less likely to practise this self-assessment of their gaps. The habit of ease can erode the ability to identify what one does not know and to plan learning strategies. Thus, AI can give students the illusion of having acquired knowledge when they have not actually done any real reasoning themselves.

The danger is confusing “answer obtained” with “knowledge acquired”. This is why it is essential to train users (students and teachers) in a conscious and controlled use of AI, adopting a stance of systematic verification and questioning.

In practice, this means encouraging students to doubt AI’s answers, to check their reliability (for example by looking up the references provided), and to use them as a starting point for reflection rather than as absolute truth.

The use of AI must therefore be accompanied by metacognition. The student should ask themselves: how has AI helped me, and what might I have missed without my own critical thinking?

In short, to avoid the illusion of mastery, the teacher plays a key role: that of guardian of critical thinking. Students must be made aware of AI’s limits, trained to validate information (cross-checking with course material or other sources), and engaged in activities that force them to go beyond simply copying the machine’s answer (see following sections). AI must be presented as a support tool and not as an infallible oracle. This critical-thinking education about AI aligns with the concept of AI literacy, which aims to give everyone the keys to using these tools intelligently (Anders, 2023).

AI as an educational support tool (virtual tutor)

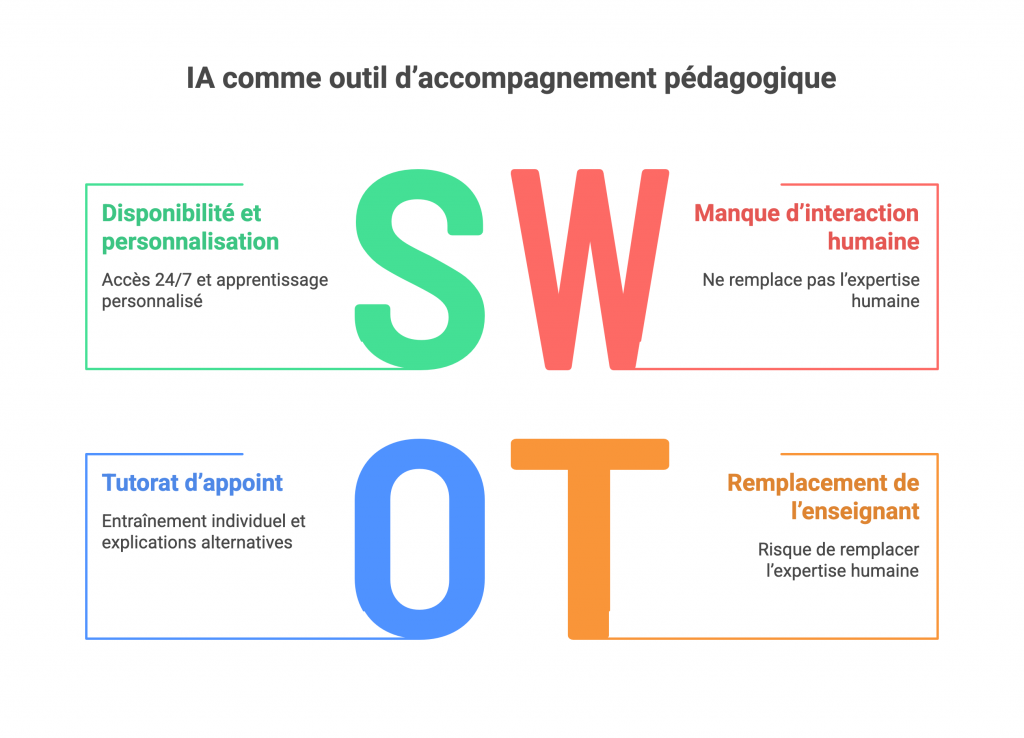

Another emerging use of AI in education is as a virtual tutor or teaching assistant. In theory, a sophisticated conversational agent can be available 24 hours a day to answer students’ questions, re-explain a concept, provide additional examples, or even guide the learner step by step through the process of solving a problem.

This echoes psychologist Vygotsky’s ideas on the zone of proximal development: a tool capable of providing assistance pitched just above the student’s current level could help them progress. Generative AI has the potential to offer this kind of assistance: it can provide explanations, answer questions, structure a learning path, and even help students produce study and revision materials (flashcards, summaries).

Ideally, one could imagine a personalised chatbot-tutor for each student, filling certain gaps instantly or offering tailored exercises. However, this attractive vision of a “mentor” AI must be strongly tempered by the reality of its pedagogical and socio-affective limitations.

In practice, several risks arise when using AI as a tutor:

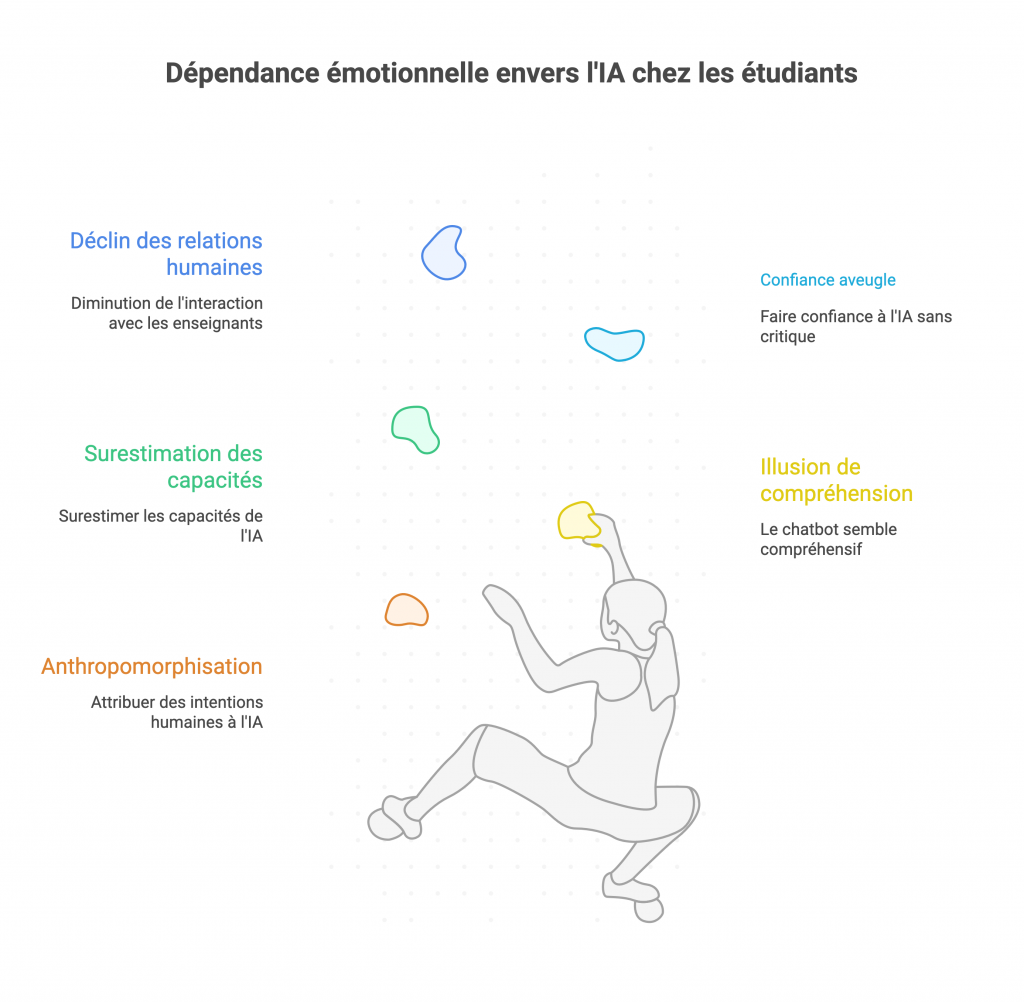

- Anthropomorphisation and emotional dependence. Students tend to anthropomorphise AI, that is, to attribute intentions or intelligence to it that it does not have. A polite and encouraging chatbot can create the illusion of a sympathetic interlocutor. Some students may then develop a socio-affective dependence on AI, perceiving it as a benevolent mentor, when it is merely an algorithm without consciousness or genuine empathy. This illusion of a pedagogical relationship can lead to overestimating AI’s capabilities and placing blind trust in it, to the detriment of the relationship with the human teacher.

- Reduction of human interactions. If AI answers all questions, students may turn to their teacher less often and discuss less with their peers. Yet we know that human pedagogical dialogue, whether with teachers or in groups with peers, is essential for deep learning. Replacing these rich exchanges with AI interactions risks impoverishing the interactive and reflective dimension of the learning process.

- For example, a student preparing for an exam may stop asking questions in class or taking part in the discussion forum because they already get all their answers from a chatbot; they thereby deprive themselves of the additional insights, the debate of ideas and the personalised feedback that a teacher or other learners could provide.

- Decreased frustration tolerance. A human teacher knows that students sometimes need to be challenged, pushed to search for themselves, at the risk of being wrong and learning from their mistakes. Managing this productive frustration is part of the educational process (we also learn by stumbling on a problem). AI, by contrast, tends to provide immediate answers and smooth over difficulties. By constantly obtaining instant solutions, students may lose the habit of persevering when faced with a complex concept. Their cognitive resilience and capacity to sustain effort may diminish, which is problematic when tackling demanding tasks that require patience and reflection.

- Disappearance of cognitive conflict. Socioconstructivist theories of learning emphasise the importance of cognitive conflict: it is often by confronting different ideas, debating, and being wrong that we build new, more solid knowledge. However, conversational AI, by design, generally seeks to provide a concise, neutral and uncontroversial answer (it avoids taking sides or expressing strong disagreement, unless explicitly asked). This means that AI often produces consensual answers that do not provoke contradiction. A student who settles for exchanges with AI will not experience this healthy confrontation of ideas that they would have had debating with a teacher or a peer with a diverging viewpoint. The risk is that they believe they have a complete understanding of the subject when they have not had to question their own initial conceptions, for lack of contradiction from AI. In sum, AI can smooth over debates and give a misleading impression of “tidy” knowledge, whereas learning often benefits from being a somewhat chaotic process of disagreements and reconsiderations.

Despite these risks, AI can play a useful supporting role if its use is well framed. The idea is not to let it entirely replace the teacher or the group, but to use it as a complement. For example, a chatbot could serve as a back-up tutor for individual training: the student practises exercises with AI, which explains their mistakes, then the teacher holds a debriefing in class to revisit misunderstood points and bring human expertise. Likewise, AI could provide alternative explanations to those of the teacher (to reach different sensibilities), which would then be discussed in class.

The key is to keep the teacher in the role of orchestrator: it is the teacher who steers the activity, validates or corrects AI’s contributions, and ensures pedagogical quality. Students must also be prepared to use an AI tutor critically: for example, by encouraging them to challenge AI (“what would happen if…?”, “could you explain it differently?”) rather than accepting the first answer, or by asking them to compare AI’s answer with that of a peer to see whether there are differences. In this way, one can try to reintroduce cognitive conflict and human interaction around the AI tool.

In summary, AI as a pedagogical support tool offers advantages (availability, personalisation, variety of examples), but must not supplant human interaction or exempt one from the effort of reflection. It must be used in measured doses, keeping in mind that a chatbot, however sophisticated, lacks the richness of a human being in the act of teaching. The socio-affective role of the teacher (their capacity to inspire, motivate, and correct with empathy) remains irreplaceable.

AI and academic production: between assistance and substitution

A third impact of AI in higher education concerns the production of academic work (assignments, reports, dissertations, etc.) by students. ChatGPT and similar tools can now generate essays, computer code, summaries and more with such ease that some students are tempted to use them to do (or help do) their written work. This raises a whole series of pedagogical and ethical questions:

- What is learned if AI does the work in the student’s place?

- How can the student’s own contribution be assessed?

- Is it plagiarism or cheating?

- Should it be banned or allowed?

In this section, we first examine the cognitive risks of excessive delegation to AI, then the issues of academic integrity, before looking at the strategies that can be adopted to strike a balance between beneficial and problematic uses of AI in student work.

- Cognitive risks and “delegation” of intellectual work. Entrusting AI with writing an assignment in one’s place may seem like a time-saver, but it is above all a missed learning opportunity for the student. By letting AI do the work, the student gives up part of the intellectual process targeted by the exercise.

- Intellectual passivity and non-internalisation of knowledge. When AI writes a dissertation or an essay, the student does not appropriate the concepts mobilised. They have not had to reformulate in their own words, to search for information, to select and then synthesise it. Yet it is precisely by making this effort of reformulation and structuring that one consolidates one’s knowledge. AI provides a finished product, but the student has not travelled the path of thought that builds knowledge. This leads to a decline in critical thinking, as the student accepts the generated text without questioning it. One also observes a deficit in knowledge acquisition: not having actively manipulated ideas means risking forgetting them quickly or being unable to mobilise them in a different context.

- By constantly delegating writing, students lose practice in fundamental skills such as documentary research, information analysis and synthesis, or the logical structuring of a written piece. Yet these writing skills are essential for structuring and conveying a line of reasoning, and prove valuable well beyond university. In short, using AI as a cognitive shortcut deprives students of the very exercise that was the pedagogical aim of the assessment.

- The “plausibility factory” and the risk of undetected errors. Generative AI models show capabilities that can be impressive when producing fluent and plausible text. However, plausible does not mean correct. AI can weave subtle errors into an assignment (incorrect dates, biased interpretations, wrong calculations) while maintaining a convincing style. A student unfamiliar with the subject might not spot these errors and submit work containing untruths. In doing so, they incorporate those errors into their own understanding of the subject, validating them simply by having used them. This is the risk of cognitive contamination: by repeatedly reading and reworking a flawed text to polish it, one ends up believing its content. AI is a plausibility factory: it will always give you something that “looks” true. This requires all the more vigilance on the part of the student (and the teacher who will grade the work) to detect the hidden error. A student relying on AI must therefore be trained to read critically what AI produces, which is no small matter when one is starting out in a field.

- Compromising the integrity of learning. Ethically, if a piece of work is submitted as the student’s own when it has largely been written by AI, we can speak of fraud or at the very least academic dishonesty. This raises the question: does a text produced by AI and used as-is constitute plagiarism or cheating? Current academic rules have not all anticipated this situation. It is becoming urgent for higher-education institutions to clarify their position on the matter. At a minimum, it seems necessary to distinguish legitimate uses (for example, using AI as a source of inspiration, to proofread, or to brainstorm ideas) from problematic uses (for example, generating an entire assignment and submitting it as one’s own production). Without this, students move forward on unclear ground, with some thinking they are doing no wrong by leaning “a lot” on AI, while others may unduly feel guilty for a simple spell-checking use.

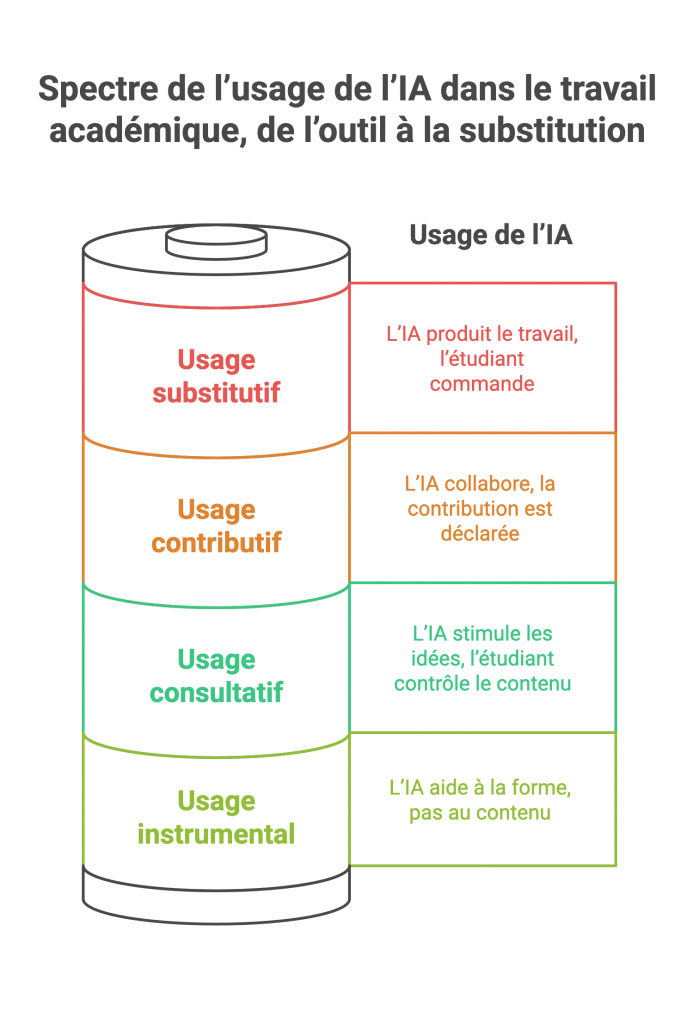

The above points show that the boundaries between original creation, assistance and cognitive delegation are becoming blurred in the age of AI. Rather than thinking in terms of all or nothing (either allowing AI completely or banning it entirely), it makes more sense to adopt a nuanced approach. Experts propose developing a gradation of AI uses in academic work.

For example, we can distinguish between:

- Instrumental use: AI is used as a technical tool without impact on the intellectual content of the work. Examples: correcting spelling/grammar, formatting a text, verifying references. This is comparable to software such as Grammarly or Antidote, which does not bring ideas but merely helps with form. This use is generally considered acceptable, in the same way as using an automatic proofreader.

- Consultative use: AI serves as a thinking partner to stimulate ideas, without substituting for the author. For example, one discusses with ChatGPT to gather leads on a topic, or asks questions to clarify a concept, but in the end the student writes the final text with their own words and ideas. Here, AI is a kind of intellectual sparring partner, which can be seen as a tolerable use (some will even see it as a form of learning) as long as the student remains in control of the content.

- Contributive use: AI is an acknowledged collaborator, generating a significant share of the content, but this contribution is framed and declared. For example, a student may ask AI to write an explanatory paragraph that they will then include in their assignment, clearly signalling it (“paragraph generated with such-and-such tool, reviewed by myself”). This can be likened to co-authorship between the student and AI. It is of course a sensitive area: we move beyond the purely personal exercise, but if done with transparency and oversight (and, for example, authorised by the teacher for this specific exercise), it could be accepted in certain contexts. The assessment would then need to be adapted accordingly.

- Substitutive use: AI is the main producer of the work, with the student more or less reduced to commissioning it. This is the case of an assignment almost entirely written by ChatGPT and submitted as-is (or only very slightly reworked) by the student. Here there is clearly a delegation of intellectual work: the student has not accomplished the expected cognitive task, which raises a pedagogical problem (no real learning) and an ethical one (a form of cheating). This use must be considered unacceptable in the context of a graded assignment, just like classic plagiarism.

Between these categories, one can imagine gradients. The important thing is to explicitly set the limits in each context (each course, each institution): what is permitted, what is not. For example, a teacher may say to their students: “For this assignment, you may use ChatGPT to help you find ideas (consultative use), but any generated passage must be rewritten in your own words and it is forbidden to leave it as-is (no substitutive use).” Another teacher may allow contributive use on condition of appending the AI-generated draft and writing a reflection on how AI was used. Yet another may choose to prohibit AI entirely for a given assignment (including instrumental use) if the goal is to assess the student’s raw ability without help.